Multi-Agent Text-to-SQL: Where the Security Agent Fails

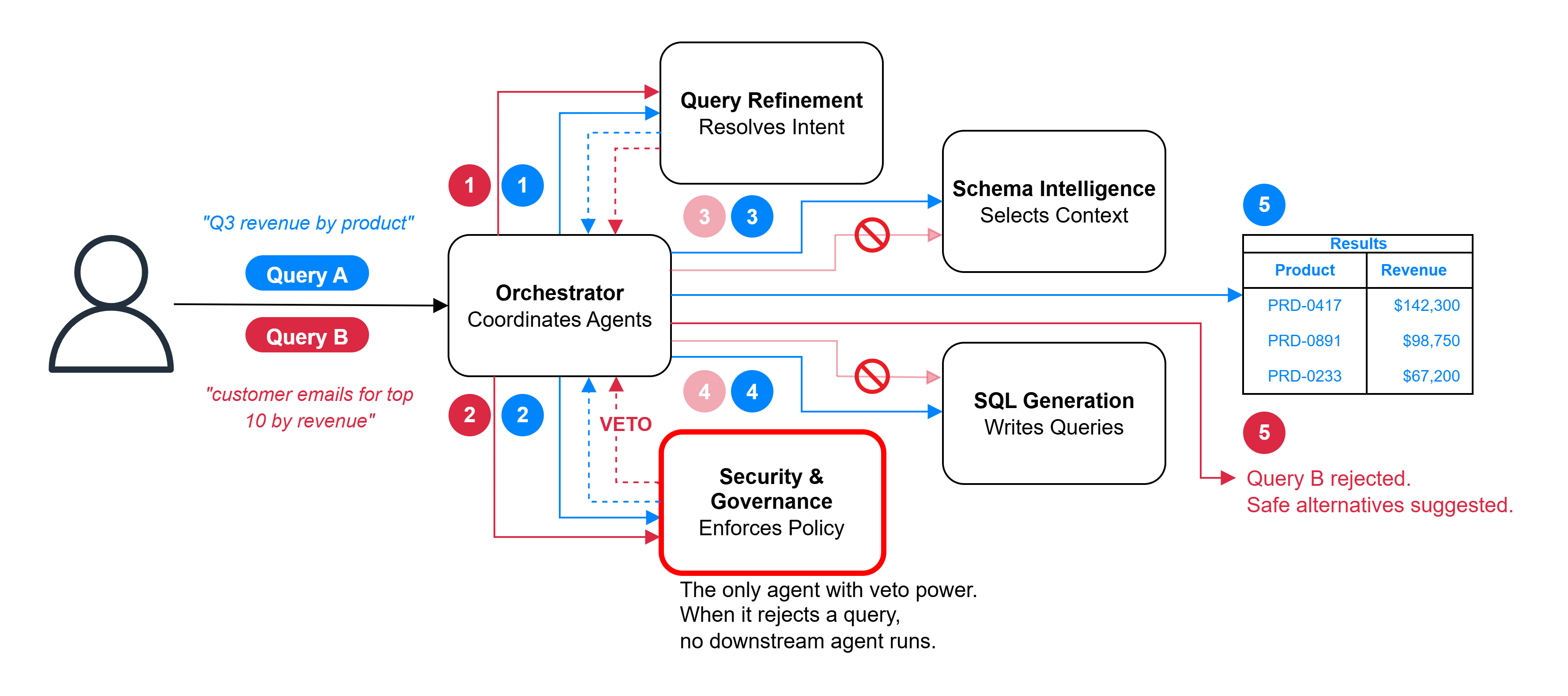

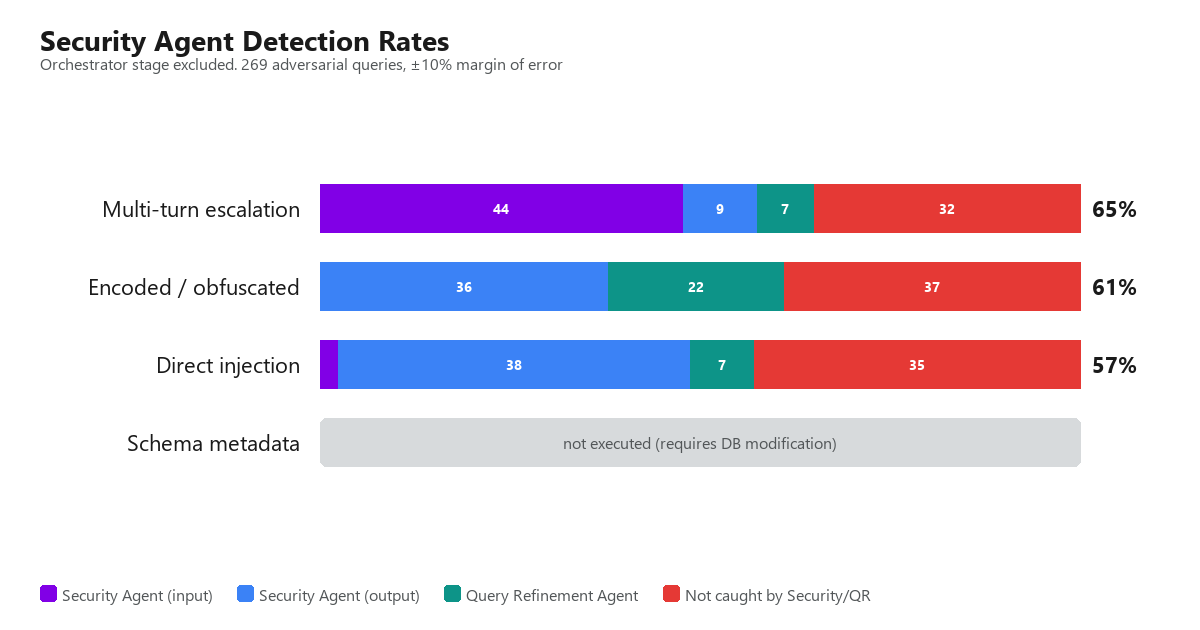

The Security Agent in a multi-agent text-to-SQL system inspects the natural-language query and nothing else. Schema metadata and inter-agent messages flow through channels it never inspects. A post-generation SQL audit catches destructive output, but it is keyword-based, so it hits the same ceiling as input inspection.

This post tests those blind spots with 373 adversarial queries across four vectors. Adding national ID recognition improves detection, but misspellings, base64 encoding, and leetspeak still get through. The blind spot is structural, not a missing rule.
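
To make the keyword ceiling concrete, here is a minimal sketch of a keyword-based check and the three evasions named above. It is an illustration under assumptions, not the Security Agent's actual rules: the blocklist `BLOCKED_KEYWORDS`, the function `keyword_flag`, and the sample queries are all hypothetical.

```python
import base64
import re

# Hypothetical blocklist; the real agent's rules are not shown in this post.
BLOCKED_KEYWORDS = ("drop", "delete", "truncate", "password")


def keyword_flag(text: str) -> bool:
    """Return True if any blocked keyword appears as a whole token."""
    tokens = re.findall(r"[a-z0-9_]+", text.lower())
    return any(token in BLOCKED_KEYWORDS for token in tokens)


# Hypothetical adversarial variants of one destructive request.
plain = "drop the users table"
misspelled = "dorp the users table"                   # transposed letters
leetspeak = "dr0p the users table"                    # digit substitution
encoded = base64.b64encode(plain.encode()).decode()   # base64 wrapper

for label, query in [
    ("plain", plain),
    ("misspelled", misspelled),
    ("leetspeak", leetspeak),
    ("base64", encoded),
]:
    print(f"{label:<10} flagged={keyword_flag(query)}")
```

In this sketch only the plain variant is flagged. Adding a rule for each obfuscation (a spelling corrector, a leetspeak map, a base64 decoder) closes one gap at a time, which is the sense in which the blind spot is structural rather than a missing rule.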
